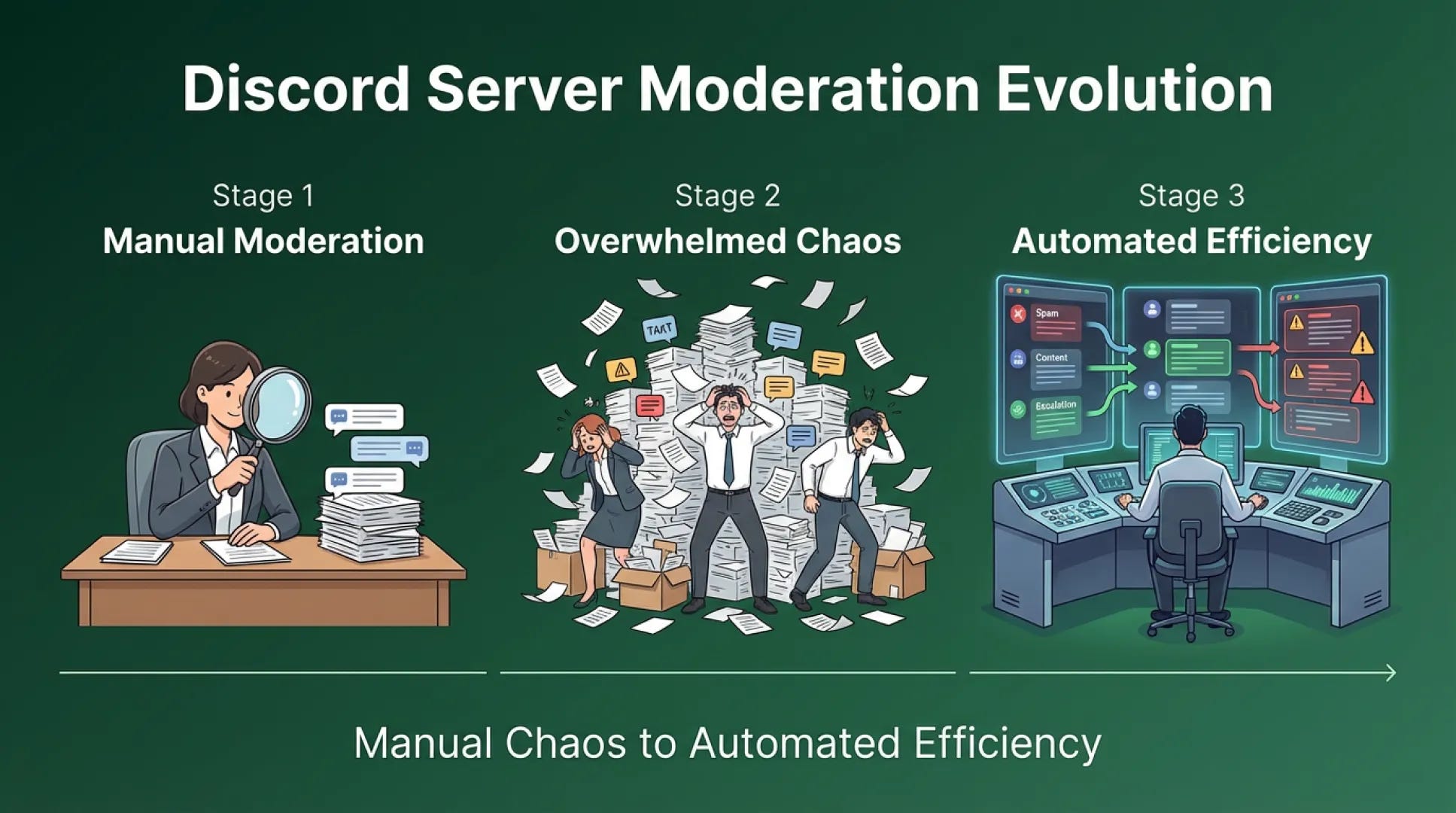

Your Discord community just passed 400 members. Your single moderator who handled everything at 100 members is now underwater. Messages come in faster than she can read them. Spam sits in channels for hours. Someone posts a referral scam and it takes 45 minutes before anyone notices. A conflict escalates because no moderator saw it starting.

You hire two more moderators. Now you have three people manually monitoring channels. Coverage improves slightly but the fundamental problem persists. Manual monitoring doesn't scale with community growth.

The math is unforgiving. At 100 members posting an average of 5 messages daily, that's 500 messages. One moderator can scan that. At 500 members with the same average, that's 2500 messages. Even three moderators struggle to monitor that volume effectively. At 1000 members, you're looking at 5000 messages daily and no reasonable number of human moderators can manually review every one.
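To make that arithmetic concrete, here is a minimal sketch. The 5 messages per member figure comes from the example above; `READ_CAPACITY` is a hypothetical number chosen only for illustration, so substitute whatever your own moderators can realistically scan in a day.

```python
# Daily message volume versus what a moderation team can realistically read.
MESSAGES_PER_MEMBER = 5  # average from the example above
READ_CAPACITY = 600      # assumed: messages one moderator can scan per day


def daily_messages(members: int) -> int:
    """Total messages per day for a community of the given size."""
    return members * MESSAGES_PER_MEMBER


def moderators_needed(members: int) -> int:
    """Moderators required to read every message, rounded up."""
    # Ceiling division: a fraction of a moderator still means another hire.
    return -(-daily_messages(members) // READ_CAPACITY)


for members in (100, 500, 1000):
    print(members, daily_messages(members), moderators_needed(members))
```

Under that assumed capacity, the headcount needed to read everything keeps rising with every few hundred new members, which is the scaling problem in miniature.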

Manual moderation fails at scale because a moderator's reading capacity is fixed while message volume keeps climbing with membership. Moderation needs grow at least in step with membership, and often faster, because more members means more messages, more interactions between members, and more potential violations.

The solution is automated moderation infrastructure that catches pattern violations while human moderators handle situations requiring judgment.

Automated moderation operates on rules and patterns. Spam follows patterns. Scam links follow patterns. Slurs and harassment follow patterns. Automated systems can detect these patterns instantly across thousands of messages. Human moderators can't.

I managed a creator community that grew from 200 to 1200 members in four months. At 200 members, one moderator handled everything manually. She'd check channels throughout the day, remove spam, address conflicts, enforce rules. It took roughly 90 minutes daily.

At 600 members, she was spending 6 hours daily on moderation and still missing things. We hired another moderator. Two people spending 6 hours daily each. The community was growing but moderation costs were exploding.

We implemented automated moderation. Automatic spam filters using pattern detection. Keyword lists for slurs, scams, and referral links with automatic message deletion. Account age restrictions preventing fresh accounts from posting immediately. Automated warnings for first-time minor violations.

Moderation time dropped from 12 person-hours daily back to 2. The automation caught 90% of rule violations. Human moderators focused on the 10% requiring judgment: nuanced conflicts, context-dependent situations, edge cases.

As the community grew to 1200 members, moderation time stayed at 2 person-hours daily. The automation scaled with community size while human workload remained constant.

The practical implementation uses Discord's moderation tooling. Discord's built-in AutoMod and third-party bots like Carl-bot or Dyno provide spam filtering, keyword detection, and automated actions. You configure rules based on your community guidelines. The tool enforces those rules automatically.

Basic automated moderation includes spam filters that detect repetitive messages or suspicious links, keyword detection that flags or removes messages containing prohibited words, new account restrictions that limit posting permissions for accounts under a certain age, and automated warnings that notify members about rule violations without moderator intervention.
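The checks described above can be sketched in plain Python. This is not how AutoMod, Carl-bot, or Dyno work internally, and it calls no Discord API. The `Message` type, the `RuleFilter` class, the keyword list, and every threshold are hypothetical placeholders meant only to show the shape of rule-based filtering.

```python
import re
from collections import deque
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical rules; replace with your own community guidelines.
BLOCKED_KEYWORDS = {"free nitro", "referral bonus"}
MIN_ACCOUNT_AGE = timedelta(days=7)
REPEAT_LIMIT = 3  # identical messages in a row before it counts as spam
LINK_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


@dataclass
class Message:
    author_id: int
    content: str
    account_created: datetime
    sent_at: datetime


class RuleFilter:
    def __init__(self) -> None:
        # Last few normalized messages per author, for repetition checks.
        self._recent: dict[int, deque[str]] = {}

    def check(self, msg: Message) -> str:
        """Return 'delete', 'flag', or 'allow' for a message."""
        text = msg.content.lower().strip()

        # Keyword detection: remove messages containing prohibited phrases.
        if any(keyword in text for keyword in BLOCKED_KEYWORDS):
            return "delete"

        # Spam filter: the same text REPEAT_LIMIT times in a row.
        history = self._recent.setdefault(
            msg.author_id, deque(maxlen=REPEAT_LIMIT)
        )
        history.append(text)
        if len(history) == REPEAT_LIMIT and len(set(history)) == 1:
            return "delete"

        # New account restriction: links from fresh accounts go to a human.
        is_new_account = msg.sent_at - msg.account_created < MIN_ACCOUNT_AGE
        if is_new_account and LINK_PATTERN.search(text):
            return "flag"

        return "allow"
```

Returning `flag` rather than `delete` for the ambiguous case keeps a human in the loop for anything that might be legitimate.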

The human moderator role shifts from monitoring everything to handling escalations. They don't read every message. They respond when the automation flags something requiring judgment. They handle appeals on automated actions. They address conflicts that automation can't resolve.

Some community managers resist automation because they worry about false positives. This concern is valid but manageable. You tune your filters based on results. Start conservative with obviously bad patterns. Monitor for false positives. Adjust as needed. Members can appeal automated actions if the system makes mistakes.
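One way to approach that tuning loop is to track, per rule, how often a moderator overturns an automated action. The sketch below assumes you keep a simple review log. The rule names, the sample data, and the 25% tolerance are all made up for illustration.

```python
from collections import Counter

# Each entry: (rule_name, was_false_positive), recorded when a moderator
# reviews an automated action or handles an appeal. Sample data only.
reviewed_actions = [
    ("keyword:referral", False),
    ("keyword:referral", False),
    ("link:new_account", True),
    ("link:new_account", False),
    ("link:new_account", True),
    ("repeat:spam", False),
]

MAX_FALSE_POSITIVE_RATE = 0.25  # assumed tolerance; pick your own


def false_positive_rates(actions):
    """Share of each rule's reviewed actions that were mistakes."""
    totals, mistakes = Counter(), Counter()
    for rule, was_false_positive in actions:
        totals[rule] += 1
        if was_false_positive:
            mistakes[rule] += 1
    return {rule: mistakes[rule] / totals[rule] for rule in totals}


def rules_to_loosen(actions, limit=MAX_FALSE_POSITIVE_RATE):
    """Rules whose false positive rate exceeds the tolerance."""
    rates = false_positive_rates(actions)
    return sorted(rule for rule, rate in rates.items() if rate > limit)


print(rules_to_loosen(reviewed_actions))
```

A rule that shows up in this list is a candidate for loosening, or for switching from automatic deletion to flagging for review.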

The alternative is to keep hiring moderators indefinitely as you grow or to accept that moderation coverage will degrade. Neither option is sustainable.

The timing matters. Implement automated moderation before you need it. If you wait until your moderators are drowning, you're implementing under crisis conditions. Set up automation when moderation is manageable so you can tune it properly.

In my experience, the threshold is around 300 members. Below that, manual moderation is fine. Above that, you need automation infrastructure. Don't wait until 800 members to implement what should have been in place at 300.

Your competitors are trying to scale manual moderation and burning out their teams. Their communities feel unsafe because violations sit for hours. You can build automated infrastructure that keeps communities safe without moderation costs climbing as fast as membership.

Manual doesn't scale. Systems do. Automate pattern detection. Preserve humans for judgment calls. Your community stays safe and your team stays sane.

Safety at scale requires automation. Human judgment stays essential. Both together create sustainable moderation.